What is Kubernetes?

Kubernetes can be thought of as a container manager and systems API. It grew out of more than 15 years of Google's experience running containers internally and was open-sourced in 2014. I find the easiest way to think about Kubernetes is as Docker on steroids.

How Does Automox Use Kubernetes?

Automox recently switched to Kubernetes for our core infrastructure because it is a natural next step as the demands on that infrastructure grow. Originally, Automox ran Docker containers on their own, with AWS metrics for insight and bash scripts for management. With Kubernetes, we have more control, reliability, redundancy, and insight into our product.

Kubernetes Basics

Kubernetes Clusters

In Kubernetes, the highest level of abstraction is the cluster. A cluster can be thought of as a context or environment, and a single developer can manage several environments or projects from one machine or account. For example, a developer working on one project may have a local cluster for what they are currently working on, a dev cluster for the company's unreleased project, and a production cluster for seeing and diagnosing issues if and when they arise in production. The benefit of Kubernetes in this scenario is that the developer has full insight into all levels of the product without having to log into a remote VM and run commands from there.
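
As a rough illustration of the three-environment setup described above, a kubeconfig file might define one context per cluster. This is only a sketch: the cluster names, server URLs, and user name are hypothetical placeholders, and credential fields are left out.

```yaml
# Sketch of a kubeconfig (commonly ~/.kube/config) with one context per cluster.
# All names and server URLs are hypothetical placeholders; credentials are omitted.
apiVersion: v1
kind: Config
clusters:
  - name: local
    cluster:
      server: https://127.0.0.1:6443
  - name: dev
    cluster:
      server: https://dev.k8s.example.com
  - name: production
    cluster:
      server: https://prod.k8s.example.com
users:
  - name: developer
    user: {}
contexts:
  - name: local
    context:
      cluster: local
      user: developer
  - name: dev
    context:
      cluster: dev
      user: developer
  - name: production
    context:
      cluster: production
      user: developer
# The context kubectl uses when none is given explicitly.
current-context: local
```

With a file like this, “kubectl config get-contexts” lists the available contexts and “kubectl config use-context dev” switches to the dev cluster.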

Kubernetes Namespaces

The next level below a cluster is a namespace. A namespace is intended to group like things (pods, services, deployments) together, even if they don’t necessarily rely on each other. For example, a developer might choose to put everything related to monitoring into a namespace called “monitoring”. This might include pods for Prometheus, Grafana, etc. These are very different tools that do not depend on each other, but each is an important part of monitoring.
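
A minimal sketch of the “monitoring” namespace from the example above could look like this:

```yaml
# Namespace that groups monitoring-related resources.
apiVersion: v1
kind: Namespace
metadata:
  name: monitoring
```

Resources are placed in it by setting “namespace: monitoring” under their metadata, and “kubectl get pods -n monitoring” lists only the pods in that namespace.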

Kubernetes Pods

Pods are where things start to get interesting. A pod is a group of one or more containers (explained below under ‘Kubernetes Containers’). Usually, a pod consists of a “primary” container and, if needed, additional containers referred to as sidecars or helpers. For example, you may have your “example-app” running in a container in one pod, and a second pod that holds a MySQL database. Let’s say you want to store temporary data for “example-app”, but don’t want it to take up space in your database pod. Here, you could add a second container to the “example-app” pod that runs its own MySQL database, intended only for the “example-app” container in that pod.
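
The primary-plus-sidecar arrangement described above might be sketched as the following pod. The “example-app:1.0” image name, the “temp-db” container name, and the password value are hypothetical placeholders.

```yaml
# Sketch of a pod with a primary container and a sidecar database.
apiVersion: v1
kind: Pod
metadata:
  name: example-app
spec:
  containers:
    # Primary container. The image name is a hypothetical placeholder.
    - name: example-app
      image: example-app:1.0
    # Sidecar: a private MySQL instance for temporary data.
    - name: temp-db
      image: mysql:5.7
      env:
        - name: MYSQL_ROOT_PASSWORD
          value: change-me  # placeholder value
```

Containers in the same pod share a network namespace, so in a setup like this the app container could reach the sidecar database at 127.0.0.1:3306.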

Kubernetes Containers

If you know how to use Docker containers, then you already know how they work in Kubernetes. Although Kubernetes supports other container runtimes, Docker is the most widely used.

Kubernetes Example

This example assumes you have already set up a Kubernetes cluster, and therefore have kubectl installed along with either Minikube (if you have Linux) or Docker Desktop (for Mac).

First, let’s create our “app” pod. This will hold only one container, which in our case will be an NGINX web server.

nginx.yaml:

    apiVersion: v1
    kind: Pod
    metadata:
      name: example-nginx
      labels:
        app: super-cool-app
    spec:
      containers:
        - name: super-cool-app
          image: nginx:latest
          imagePullPolicy: IfNotPresent
          ports:
            - name: http
              containerPort: 80

Let’s walk through some of the most important properties in more depth:

- Name: This is the name of the pod.

- Labels: These are arbitrary key/value pairs that can be used by selectors. In this case, I have added one key/value of app/super-cool-app.

- Containers: This is a list of containers. I have only specified one, but many more could be added.

- Container.name: This is the name of the container. If there are multiple in one pod, this is very useful. In this particular example, however, it is not.

- Container.image: This is the Docker image. In this case, I have chosen nginx:latest from Docker Hub. I could use any image, including those hosted in ECR or locally.

- Container.ports: This is a list of ports the container listens on. Only the port number (containerPort) is required, but there are other optional fields such as “name”, as shown above.

Next, we deploy the pod defined in our YAML file. To do so, simply run: “kubectl apply -f nginx.yaml”. The next step is to wait for it to enter “Running” status. To check the status, enter: “kubectl get pods”.

In the output, the “1/1” in the READY column indicates that 1 out of 1 containers inside the pod is ready.

Once the status shows “Running”, we can move on to the next step.

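We can verify that it’s working by using a Kubernetes command to port forward: “kubectl port-forward example-nginx 80”.

This command forwards traffic from port 80 on my computer to port 80 of the pod, which is the port the NGINX container listens on. Navigate to “http://localhost” in your browser to see the default nginx welcome page. If binding local port 80 requires elevated privileges on your machine, “kubectl port-forward example-nginx 8080:80” forwards local port 8080 instead, and the page is then at “http://localhost:8080”.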

Now, we will deploy the pod that will hold the MySQL server. If you have ever used MySQL, you likely know that by default the server binds to the local IP address (127.0.0.1). This makes it impossible to access from outside the container. To resolve this and allow access from other containers, there are a few steps we need to take. First, the server needs to bind to 0.0.0.0. To accomplish this, we could build our own image with a Dockerfile that copies in a custom configuration file, or we could use a “ConfigMap” in Kubernetes. For this exercise, we will choose the latter option and create a file called “mysqld.configmap.yaml”:

    apiVersion: v1
    kind: ConfigMap
    metadata:
      name: mysqld-configmap
    data:
      mysqld.cnf: |
        # Copyright (c) 2014, 2016, Oracle and/or its affiliates. All rights reserved.
        #
        # This program is free software; you can redistribute it and/or modify
        # it under the terms of the GNU General Public License as published by
        # the Free Software Foundation; version 2 of the License.
        #
        # This program is distributed in the hope that it will be useful,
        # but WITHOUT ANY WARRANTY; without even the implied warranty of
        # MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the
        # GNU General Public License for more details.
        #
        # You should have received a copy of the GNU General Public License
        # along with this program; if not, write to the Free Software
        # Foundation, Inc., 51 Franklin St, Fifth Floor, Boston, MA 02110-1301 USA
        #
        # The MySQL Server configuration file.
        #
        # For explanations see
        # http://dev.mysql.com/doc/mysql/en/server-system-variables.html
        [mysqld]
        pid-file = /var/run/mysqld/mysqld.pid
        socket = /var/run/mysqld/mysqld.sock
        datadir = /var/lib/mysql
        #log-error = /var/log/mysql/error.log
        # By default we only accept connections from localhost
        bind-address = 0.0.0.0
        # Disabling symbolic-links is recommended to prevent assorted security risks
        symbolic-links=0

Properties of interest here include:

- Kind: ConfigMap. This tells Kubernetes that this resource is a configmap.

- Name: This is the name of the configmap and how we will select it later.

- Data: This holds the information for the configmap in key/value form. We will only have one piece of data: “mysqld.cnf”.

Apply this configmap with “kubectl apply -f mysqld.configmap.yaml”.

Now, let’s make the MySQL pod that will use this configmap in the file “mysql.yaml”:

    apiVersion: v1
    kind: Pod
    metadata:
      name: mysql-db
      labels:
        app: super-cool-app
    spec:
      containers:
        - name: mysql-db
          image: mysql:5.7
          imagePullPolicy: IfNotPresent
          ports:
            - name: mysql-port
              containerPort: 3306
          env:
            - name: MYSQL_ROOT_PASSWORD
              value: qwertyuiop1234
          volumeMounts:
            - mountPath: /etc/mysql/mysql.conf.d/
              name: mysql-conf
      volumes:
        - name: mysql-conf
          configMap:
            name: mysqld-configmap
            items:
              - key: mysqld.cnf
                path: mysqld.cnf

Properties of interest here include:

- Labels: I have given it the same label as the nginx pod because these two pods are part of the same app. This is useful when querying for all pods related to a certain app (e.g., kubectl get pods -l app=super-cool-app).

- Containers.image: Unlike the nginx pod, where I used the nginx:latest image, I wanted to lock the version of MySQL to 5.7. Therefore, I specified the tag “5.7” (available on Docker Hub).

- Ports: I declared port 3306 as the port this container listens on. This is the default port for MySQL.

- Env: I have set the root password for the database. This is required, and is documented on the Docker Hub page for the image.

- Volumes.name: This is the name of the volume I am making available to the pod. The container’s volumeMounts entry refers to the volume by this name to mount it at a specific path.

- Volumes.configMap: This indicates that the volume is backed by a configmap, selected by its name (mysqld-configmap).

- Volumes.configMap.items.key/path: This is the key to read from the configmap, and the path to load it under. The key must match a key in the data section of the configmap previously discussed (mysqld.cnf), and the path is the file name it is written to inside the mount directory.
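
The mysql.yaml manifest above stores the root password in plain text. One common alternative, sketched here, is to keep it in a Kubernetes Secret and reference it from the container’s env entry. The secret name “mysql-root” and the key “password” are hypothetical choices, not something the original setup defines.

```yaml
# Secret holding the MySQL root password.
# The name "mysql-root" and key "password" are hypothetical choices.
apiVersion: v1
kind: Secret
metadata:
  name: mysql-root
type: Opaque
stringData:
  password: qwertyuiop1234
---
# Variant of mysql.yaml: same pod, but the env entry reads the password from the Secret.
apiVersion: v1
kind: Pod
metadata:
  name: mysql-db
  labels:
    app: super-cool-app
spec:
  containers:
    - name: mysql-db
      image: mysql:5.7
      imagePullPolicy: IfNotPresent
      ports:
        - name: mysql-port
          containerPort: 3306
      env:
        - name: MYSQL_ROOT_PASSWORD
          valueFrom:
            secretKeyRef:
              name: mysql-root
              key: password
      volumeMounts:
        - mountPath: /etc/mysql/mysql.conf.d/
          name: mysql-conf
  volumes:
    - name: mysql-conf
      configMap:
        name: mysqld-configmap
        items:
          - key: mysqld.cnf
            path: mysqld.cnf
```

Keep in mind that a Secret is only base64-encoded by default, not encrypted, so this mainly keeps the password out of the pod manifest rather than securing it by itself.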

Now, let’s deploy this pod with “kubectl apply -f mysql.yaml”.

Verify that the two pods are running successfully with “kubectl get pods”.

Just like with a plain Docker container, we can exec into it using the eerily similar command: “kubectl exec -it example-nginx -- bash”.

Now that we are inside the nginx pod, let’s install some utilities we will use:

    apt update && apt install -y inetutils-ping mysql-client

If we were to try to access the MySQL server by name at this time, we would get an error. Declaring port 3306 on the MySQL pod does not by itself give other pods a stable way to find it: without a service, the pod can only be reached by its pod IP, which can change whenever the pod is recreated.

To fix this, we need to create a Kubernetes service. The most basic service type is “ClusterIP”, which is also the default when no type is specified. A ClusterIP service is reachable by other pods in the cluster, but it is not exposed externally.

mysql-service.yaml:

    kind: Service
    apiVersion: v1
    metadata:
      name: mysql-service
    spec:
      selector:
        app: super-cool-app
      ports:
        - protocol: TCP
          port: 3306
          targetPort: 3306

Properties of interest here include:

- Kind: Service. This tells Kubernetes that this resource is a service.

- Name: This is the name of this service. The name will be important later on because it forms a DNS A record that other pods can use.

- Selector: This is why specifying the labels in the MySQL pod was important. The selector tells the service which pods to route traffic to. In this case, it applies to any pod that has the app key with the value “super-cool-app”. Note that in this example the nginx pod carries that label as well, so the selector matches it too.

- Ports: This identifies which ports to expose. The port field is the port the service itself listens on, and targetPort is the port on the pod that traffic is forwarded to. Only port is required; targetPort defaults to the same value. Setting them to different values is useful if, for example, you have multiple databases running.
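
To route only to the database pod rather than to every pod labelled app: super-cool-app, one option is to give the MySQL pod a second label and select on both. The sketch below assumes a hypothetical “tier: database” label, which is not part of the manifests above and would have to be added to the mysql-db pod.

```yaml
# Assumes the mysql-db pod in mysql.yaml carries a hypothetical extra label:
#   labels:
#     app: super-cool-app
#     tier: database
kind: Service
apiVersion: v1
metadata:
  name: mysql-service
spec:
  selector:
    app: super-cool-app
    tier: database  # only pods carrying both labels are selected
  ports:
    - protocol: TCP
      port: 3306
      targetPort: 3306
```

Running “kubectl get endpoints mysql-service” shows which pod IPs the service currently routes to, which is a quick way to check what the selector matched.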

Apply it with: “kubectl apply -f mysql-service.yaml”.

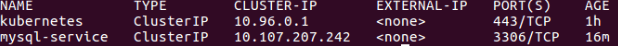

Verify that it’s working with “kubectl get services”.

Now, let’s verify that we can access the MySQL database from the nginx pod. Log into the pod with “kubectl exec -it example-nginx -- bash”.

Once in, run “mysql -h mysql-service -u root -p”. When prompted, enter the password given by the env variable. In our case, the password is qwertyuiop1234. You should now be able to log in successfully and run SQL queries!

It’s important to note here that we are able to use “mysql-service” as our hostname because that is the name we assigned to the service that exposed the MySQL server.

In the next Kubernetes installment, we will cover the steps to take to get started with Kubernetes at the enterprise level.

